In two separate cases recently, Uber has abused, or talked about abusing, the information it holds about its customers’ movements.

First, BuzzFeed reporter Johana Bhuiyan was told that Josh Mohrer, general manager of Uber New York, had tracked her on the way to a meeting.

Next, Emil Michael, SVP of business for Uber, talked at a private dinner about the possibility of using the information Uber has about hostile reporters to gather dirt on them.

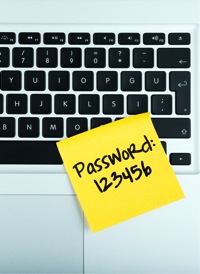

Apparently Uber has an internal tool called “God View”, which is fairly widely available to employees and allows tracking of any car or customer. Obviously such information must exist within Uber’s systems for the company to operate its business, but access for personal or inappropriate business purposes is very worrying and could put the security of customers at risk.

While Uber is the company that got caught, the potential for this kind of abuse exists in a tremendous number of businesses. We give sensitive personal information to these companies in order to allow them to provide the services that we want, but we are also trusting them to treat the data appropriately.

Last year there was a scandal within the NSA about a practice called “LOVEINT”. The name is an inside joke. Signals intelligence is called “SIGINT”, human intelligence is called “HUMINT”, so intelligence about friends and lovers was called “LOVEINT”. In practice, people within the NSA were accessing the big national databases to look up information on current or former partners, celebrities, etc.

The exact same risk exists within all of these businesses, but generally with far weaker internal controls than in the government.

I think that the solution to this is not to insist on controls that would be difficult to enforce, or to ban the keeping of information which these businesses really do need, but rather to give users visibility into when their information is viewed, why, and by whom. Abuse could then be quickly detected and exposed, while allowing each business to continue to operate as it needs to.
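To make that idea a little more concrete, here is a minimal Python sketch of what such visibility could look like. It is only an illustration under my own assumptions, not a description of anything Uber or any other company actually runs: the names `AccessRecord`, `AccessLog`, `record_view`, and `views_for` are all hypothetical. Every lookup of a customer's data must state who is looking and why, and the customer can later list those lookups.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AccessRecord:
    """One hypothetical entry: who viewed whose data, why, and when."""
    customer_id: str
    viewer: str
    reason: str
    viewed_at: datetime


class AccessLog:
    """Hypothetical append-only log of views of customer data."""

    def __init__(self):
        self._records = []

    def record_view(self, customer_id, viewer, reason):
        # Assumption: a lookup without a stated reason is refused outright.
        if not reason.strip():
            raise ValueError("a reason is required to view customer data")
        entry = AccessRecord(
            customer_id, viewer, reason.strip(), datetime.now(timezone.utc)
        )
        self._records.append(entry)
        return entry

    def views_for(self, customer_id):
        # What the customer would be shown: every view of their own data.
        return [r for r in self._records if r.customer_id == customer_id]


log = AccessLog()
log.record_view("alice", "support-42", "refund request")
log.record_view("bob", "ops-7", "driver dispute")
for view in log.views_for("alice"):
    print(view.viewer, view.reason, view.viewed_at.isoformat())
```

In a real system the log would need to be tamper-resistant and the internal tools would have to be unable to bypass it, which this sketch does not attempt to show.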

https://www.youtube.com/watch?v=XM8_JeVHwwo

Good articles for more info from: The Verge, Forbes, & Forbes again

Apple is getting taken to task for a couple of security issues. Since the iPhone was introduced, Apple has had the ability to decrypt the contents of iPhones and other iOS devices when asked to do so (with a warrant).